By Alex Thaman, CTO at Andesite

Enterprise organizations are dealing with an unprecedented volume of increasingly dense and complex data. SecOps teams must determine the best way to collect, organize, and use that data so they can identify, prioritize, and respond to threats efficiently and effectively.

The lack of data management solutions that are both scalable and cost-effective often leads to a trade-off between visibility, latency, and costs. To optimize data architecture for SecOps, organizations need to re-think their approach to data storage, management, and access, and consider moving to a modern, modular stack.

In my conversations with security leaders, many are frustrated with how much their SIEM costs but continue to pay because they don’t see another easily manageable path to reduce risk. However, modern data architectures and AI technology make it possible to break out of this cycle.

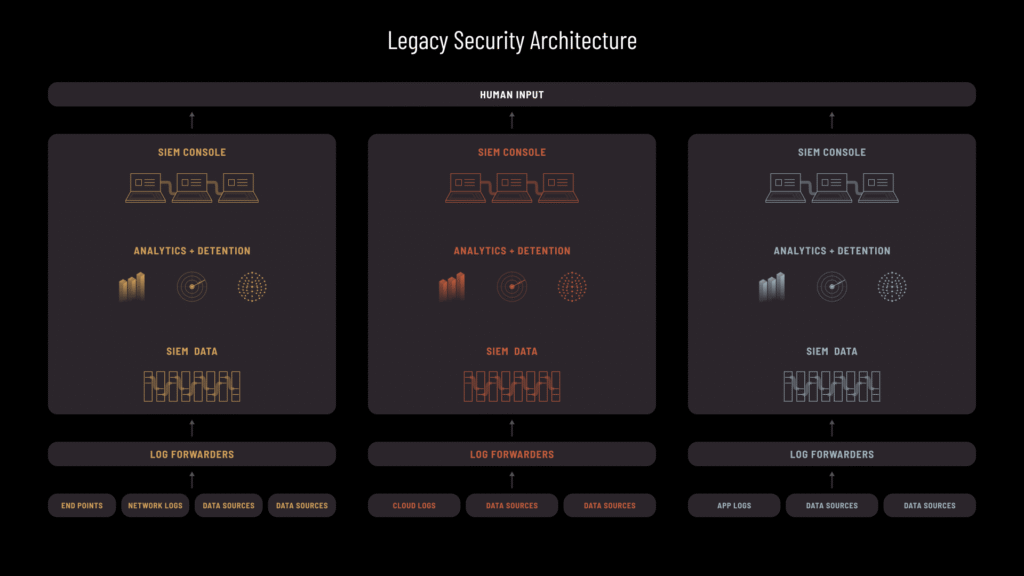

The Problem with Legacy Solutions

For cybersecurity, data architecture is the underlying framework for how data is collected, managed, and used. Building a robust architecture requires a solid understanding of how data from a multitude of sources will be used for analytics and decision-making. It cannot be optimized in isolation from these needs.

A primary SecOps challenge is the proliferation of products that were developed when data was much less complex. Attacks were also less sophisticated: for example, living-off-the-land techniques involving persistent threats over time only became prevalent a decade ago. Our industry has favored and incentivized point products with targeted solutions, leading to further data sprawl. But attacks that span long periods of time or involve lateral movement are incredibly difficult to track with simple point solutions that don’t connect the data.

Twenty years ago, the standard way to collect and analyze large volumes of data was through a relational database architecture, often managed by a database admin (DBA). Lacking the resources to tailor these complex solutions precisely, many organizations opted for SIEMs that can both store data and act as an analyst interface.

SIEMs were initially created to review application data logs. Over time, we started filtering additional types of data through them. But traditional SIEMs are not highly scalable, certainly not in a cost-effective way.

While the SIEM is still widely used, the data realities it was architected for are outdated. Today’s SOC needs vastly exceed basic log storage. Continuing to use a single, simplified architecture leads to prohibitively high costs, which forces the inevitable trade-off between access to broader insights vs. the costs of managing that data.

Fears about cost and change management delay migration to better systems, which causes further trade-offs between storage methods, query latency, and volume. Avoiding those trade-offs requires an architecture tailored to various use cases, such as low-latency BI dashboards or high-latency bulk data science analysis. Many CISOs choose what data to stream into systems based on cost, which may increase risk. However, without the ability to quantify that risk, they are essentially flying blind.

The bottom line: while the SIEM may still be the best solution for collecting, organizing, and using some data, especially medium-scale event logs, it falls short of what’s needed for SecOps today.

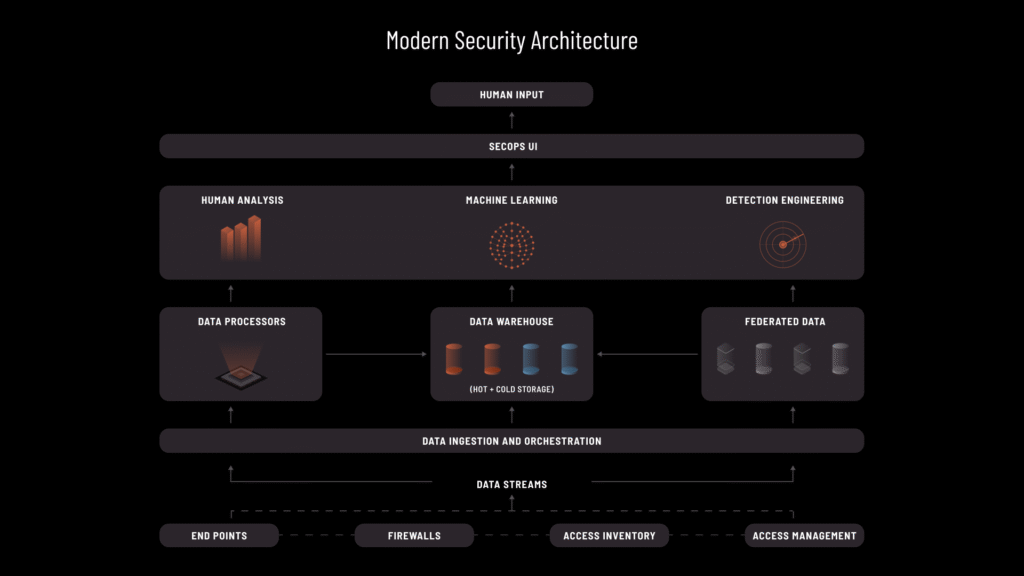

Building a Layered Architecture

The difficulty of separating bodies of security analysis work leads to an indiscriminate single data store model. To overcome scalability and cost issues, we have to separate the data architecture from the tools and analytics that use the data, even though the two tend to be closely coupled.

Modern tech stacks separate the data warehouse and analytical layer to consider who or what analyzes the data — machines, algorithms, or humans. Large organizations are adopting a layered approach, with architecture that federates data across the organization.

Another way to think about this is as a “satellite” model, combining small and large systems with many “satellites” of data orbiting around them. Different data can be filtered into different solutions, depending on what type of analysis you’re performing.
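To make the “satellite” idea concrete, here is a minimal sketch of routing events to different stores by type. The store names (`hot_store`, `search_store`, `archive_store`), the event types, and the routing table are all hypothetical and chosen only for illustration; a real deployment would define its own based on the analysis each store serves.

```python
from collections import defaultdict

# Hypothetical routing table: event type -> destination store.
ROUTES = {
    "alert": "hot_store",        # small, low-latency store for near-real-time triage
    "auth_log": "search_store",  # medium-scale event logs
    "netflow": "archive_store",  # high-volume data kept for long-timeframe analysis
}
DEFAULT_STORE = "archive_store"  # assumption: unknown types go to the cheapest tier


def route(event: dict) -> str:
    """Return the name of the store an event should be sent to."""
    return ROUTES.get(event.get("type"), DEFAULT_STORE)


def distribute(events) -> dict:
    """Group events into one batch per destination store."""
    batches = defaultdict(list)
    for event in events:
        batches[route(event)].append(event)
    return dict(batches)


events = [
    {"type": "alert", "id": 1},
    {"type": "netflow", "id": 2},
    {"type": "auth_log", "id": 3},
    {"type": "dns", "id": 4},  # not in ROUTES, falls through to DEFAULT_STORE
]
batches = distribute(events)
for store, batch in batches.items():
    print(store, [e["id"] for e in batch])
```

Keeping the routing table separate from the stores themselves is one way to let the data layer change without touching the analytics that sit on top of it.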

For example, if triaging a single alert in near real time, you need immediate access to a small amount of data across multiple tools all at once. When looking for attacks spanning a long timeframe, you could sacrifice some analysis speed for data completeness. Perhaps you want to correlate logs from today with emails, asset inventory, or other data points to answer complex “who” questions. This can’t be easily done in one system and may require additional ways to relate all of the data. Yet another challenge might be evaluating alert and resolution patterns to understand how to optimize detection.
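As a rough illustration of the “who” correlation described above, the sketch below enriches log entries with asset inventory records that, in practice, would live in a separate system. The field names (`host`, `user`, `owner`, `department`) and the sample records are invented for this example.

```python
# Hypothetical sample data; real records would come from separate systems.
auth_logs = [
    {"host": "lap-017", "user": "jdoe", "action": "login_failed"},
    {"host": "srv-db-02", "user": "svc_backup", "action": "login_ok"},
    {"host": "lap-099", "user": "unknown", "action": "login_failed"},
]
asset_inventory = [
    {"host": "lap-017", "owner": "J. Doe", "department": "Finance"},
    {"host": "srv-db-02", "owner": "DB Team", "department": "IT"},
]


def correlate(logs, inventory):
    """Attach owner and department to each log entry, keyed on hostname."""
    by_host = {asset["host"]: asset for asset in inventory}
    enriched = []
    for entry in logs:
        asset = by_host.get(entry["host"], {})
        enriched.append({
            **entry,
            # None marks a host that is missing from the inventory.
            "owner": asset.get("owner"),
            "department": asset.get("department"),
        })
    return enriched


enriched = correlate(auth_logs, asset_inventory)
unknown_hosts = [e["host"] for e in enriched if e["owner"] is None]
print(unknown_hosts)
```

Hosts that appear in logs but not in the inventory surface as gaps, which is itself a useful signal when the data sits in more than one system.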

Supporting all of these functions well requires a layered architecture that lends itself to each kind of analysis. You’ll also want to optimize schemas, aggregations, and other variables as you scale. A modern approach to data architecture includes all of these systems as well as whatever solution connects them, allowing more granular management of data movement and access. This is how organizations can solve for storage costs without trading off query latency or data volumes.

A Modular Approach

The shift to a more modular framework is a natural progression. With data coming from so many different places, it makes sense to use multiple specialized systems. However, it’s not an easy transition. Even at the largest, most sophisticated companies, designing such complex, multi-layered data systems is demanding — creating a significant security challenge.

Companies that package a single data platform and sell it as a product lack the flexibility to meet different needs. Solutions that excel at simple data analysis on a massive scale may work well for easy tasks but are less adept at advanced analysis at reasonable scale and cost. Some products are optimized for horizontal scaling by adding more machines, while others may be fundamentally superior in efficiently storing and processing data but have poor accessibility.

Compounding this complexity is a growing need to analyze data at, or closer to, the edge, in real time, without waiting for log ingestion. Some solutions address this by determining which data is and isn’t worth capturing. By doing more work at the point of data creation, you can bring less data into the central systems for analysis.
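A minimal sketch of deciding at the edge which data is worth capturing might look like the following. The severity threshold, the `ALWAYS_KEEP` event types, and the event fields are assumptions made for illustration, not a recommendation for what to drop.

```python
# Hypothetical policy: event types that are always forwarded, plus a severity floor.
ALWAYS_KEEP = frozenset({"process_start", "auth_failure"})
MIN_SEVERITY = 3


def worth_capturing(event: dict) -> bool:
    """Decide at the point of creation whether an event is forwarded centrally."""
    if event.get("type") in ALWAYS_KEEP:
        return True
    # Events without a severity field are treated as severity 0.
    return event.get("severity", 0) >= MIN_SEVERITY


def filter_at_edge(events):
    """Return the events to forward and a count of those dropped locally."""
    forwarded = [e for e in events if worth_capturing(e)]
    return forwarded, len(events) - len(forwarded)


events = [
    {"type": "heartbeat", "severity": 0},
    {"type": "auth_failure", "severity": 1},
    {"type": "file_write", "severity": 4},
    {"type": "dns_query"},
]
forwarded, dropped = filter_at_edge(events)
print([e["type"] for e in forwarded], dropped)
```

Tracking the dropped count alongside the forwarded events keeps some visibility into how much data never reaches the central systems.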

The Path Forward: Modern Data Architecture, A Proactive Approach

Cybersecurity has so far struggled to ask better questions of its data, resorting to primitive, use-case-dependent analytics like simple rule matching. This is probably due to the difficulty of making advanced analytics repeatable enough to scale SOC operations. To overcome critical challenges, we must focus on how to use data for better protection and response while also shifting from a reactive stance to a more proactive and protective one. Both start with better data architecture.

Adopting a modern, modular approach to data architecture with a single security-centric decision layer on top empowers analysts to manage and access data more efficiently and effectively, without prohibitive costs or scalability issues.